In many organizations, the Contact Center QA program is meant to develop frontline skills and improve service quality. Yet many fail because key parts of the process are missing or poorly designed.

This article is part of our Contact Center Management Series — a collection of articles that bring together practical guidance and insights to help Contact Centers run better and deliver stronger results.

If you put the jelly on before the peanut butter, the sandwich will fail. If you try to spread the peanut butter on the plate and then add the bread, it will fail even worse. Like so many things, the order is not optional. And yet we often do the easiest parts first, regardless of what the order of operations tells us. — Seth Godin

Whenever I teach any training course, I begin by asking participants what they want to learn or talk about. Their answers give me a sense of who they are and what their personal goals are for attending a training session.

For our course on How to Design a Quality Assurance Program, I can predict with unfailing accuracy what people will say.

The request I hear most often is: “I’d like to learn how to give better feedback when I talk about a call, email, or chat with our frontline people.”

That’s a completely reasonable request, and we cover it in the final module of the course.

The request I never hear is this:

“Dan, I’d like to learn how to improve what I talk about when I share feedback about a call, email, or chat with our frontline people.”

Participants, most often Quality Assurance professionals and Team Leaders, zero in on improving how they talk to their people.

Without questioning what they’re going to talk about.

But obviously you need both what you say and how you say it.

Talking to your people about unimportant things, or the wrong things — even in a compelling way — still won’t take them very far.

Which raises an important question: how should a quality assurance program be structured so that coaching conversations can actually improve performance?

Why Process Matters in Quality Assurance

Like most things in a Contact Center, quality improves when there is a robust and well-understood process.

Whether it’s forecasting volume, handling a complaint, or managing a quality assurance program, performance improves when the process is clear and well designed.

If you monitor conversations between your frontline folks and your customers, and find that the quality just isn’t up to standard, the root causes can lie at multiple points in the process.

In my experience, the best QA professionals resist the temptation to blame frontline employees. Instead, they use a diagnostic lens to identify barriers and opportunities.

Because your customers aren’t the only stakeholders served by your quality assurance process.

You’ve got to be thinking about how you can better serve your frontline people too.

Where Quality Assurance Programs Break Down

In practice, the barriers that prevent QA programs from improving service quality tend to appear in predictable places.

Barrier 1 — Lack of a Customer Service Vision

Many QA programs begin by selecting performance standards for the monitoring form.

But a strong QA program begins somewhere else entirely — with a customer service vision. A clear description of the kind of service we deliver around here.

When that vision is missing, QA professionals end up choosing standards somewhat arbitrarily. And their quality program becomes a collection of disconnected standards rather than a coherent definition of great service.

What this means for your frontline people.

If you ask a contact center or service counter professional, “What kind of service do we deliver around here?” they should be able to answer that question without hesitation.

A clear service vision does more than set guardrails around what matters most. It helps frontline people understand the kind of experience they are trying to create.

Even better, it can inspire them to think of new ways to bring those behaviors to life in customer interactions.

Barrier 2 — Weak or Excessive Performance Standards

Once a service vision is clear, the next step is deciding which performance standards belong on the monitoring form.

This is where many QA programs run into trouble.

Some forms contain far too many standards — twenty, thirty, sometimes even more.

A design with too many standards creates scoring fatigue for evaluators and cognitive overload for frontline people.

Other times, the performance standards selected focus heavily on small behaviors such as whether the agent avoided saying “um,” or kept pauses within a certain length.

These kinds of weakly chosen standards may be easy to measure, but they don’t represent the behaviors that matter most to a customer.

Customers rarely remember whether an agent avoided saying “um.”

They remember whether the person on the other end of the conversation understood them and helped solve their problem.

What this means for your frontline people.

When a monitoring form becomes overloaded with too many or weakly selected standards, the focus of the quality program shifts.

Instead of helping agents develop stronger service skills, the program becomes a mechanism for catching mistakes.

Coaches turn into police officers, there to create a culture of compliance and correction rather than a culture of learning and growth.

Not because that is their natural inclination, but because the design of the QA program leads them there.

Barrier 3 — Lack of a Quality Handbook

I’m still surprised when participants tell me they don’t have a formal Quality Handbook.

The monitoring form becomes, almost by default, the core formal documentation of the quality program.

Each standard appears as a short title on the scorecard, and evaluators are expected to interpret those standards largely on their own.

But a short description on a monitoring form is not enough to create a consistent quality process.

The monitoring form is only a shorthand representation of quality. The real guidance lives in the documentation behind it.

In strong QA programs, you’ll find a Quality Handbook — a shared reference explaining how each performance standard should be understood and evaluated.

The better Quality Handbooks even cover the purpose of the program, its scope, and how it evolves over time.

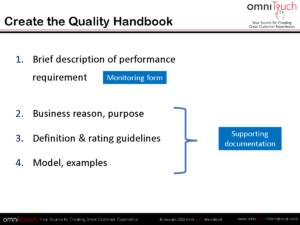

In the training programs we run, we show the difference between the monitoring form itself and the supporting documentation that sits behind it.

What a Quality Handbook Should Include

In practice, documentation usually includes four key elements:

- A brief description of the performance requirement: This is the short standard name that appears on the monitoring form.

- The business reason or purpose behind the standard: Why does this behavior matter to the customer or the organization?

- Clear definitions and rating guidelines: What is the clear, concise definition of the standard? What specifically earns a “Yes” or “No” for a compliance standard? What distinguishes the scores of “Excellent,” “Good,” and “Fair” for caliber-based behaviors?

- Models and examples: Illustrations of what strong and weak performance sound like in real conversations, supported by example libraries of calls, chats, or emails.
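For teams that keep their handbook in a shared system rather than a document, the four elements above could be captured as a simple record. The sketch below is only an illustration: the `HandbookEntry` class, its field names, and the sample empathy entry are all hypothetical, not a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class HandbookEntry:
    """One performance standard as a Quality Handbook might document it (hypothetical structure)."""

    name: str                          # short standard name shown on the monitoring form
    purpose: str                       # why the behavior matters to the customer or organization
    definition: str                    # clear, concise definition of the standard
    rating_guidelines: dict[str, str]  # rating label -> what earns that rating
    examples: list[str] = field(default_factory=list)  # pointers to example calls, chats, emails

    def missing_elements(self) -> list[str]:
        """List which of the four documentation elements are still blank."""
        missing = []
        if not self.name.strip():
            missing.append("description")
        if not self.purpose.strip():
            missing.append("purpose")
        if not self.definition.strip() or not self.rating_guidelines:
            missing.append("definitions and rating guidelines")
        if not self.examples:
            missing.append("models and examples")
        return missing


# Invented sample entry, for illustration only.
entry = HandbookEntry(
    name="Shows empathy",
    purpose="Customers remember whether the person they spoke to understood them.",
    definition="Acknowledges the customer's situation before moving to a solution.",
    rating_guidelines={
        "Excellent": "Acknowledgement is specific to what the customer said.",
        "Good": "Acknowledgement is present but generic.",
        "Fair": "No acknowledgement before the solution is offered.",
    },
)

print(entry.missing_elements())  # no example conversations have been attached yet
```

A check like `missing_elements` is one way to spot standards that appear on the monitoring form without the documentation behind them.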

Without this level of documentation, evaluators are left to interpret standards themselves — bringing their own assumptions and biases.

Over time, the quality program begins to drift.

Another advantage of preparing a Quality Handbook is that it is a journey, not just a destination.

The process of documenting standards and scoring logic often reveals where we need to think more deeply about them and clear up potential points of confusion.

Without a single, clear source of truth, how can we realistically expect to scale a quality program with any level of consistency or effectiveness?

What this means for your frontline people.

When performance standards are not clearly defined, feedback can feel subjective or inconsistent.

Agents may hear different interpretations of the same standard depending on who reviewed the conversation.

And when expectations are unclear, it becomes much harder to understand what good performance actually looks like — or how to improve.

Barrier 4 — A Monitoring Form is Not a Coaching Tool

Even when organizations establish a clear service vision, meaningful standards, and a well-documented quality handbook, another barrier often appears.

There is still a widespread assumption that the monitoring form itself — the scorecard with its long list of standards and scores — is enough to improve an individual’s quality performance.

The logic seems deceptively straightforward:

- Send the frontline professional the completed scorecard

- The frontline professional sees where they scored well and where they didn’t

- The frontline professional corrects their behavior

- Quality improves

But in practice, this assumption rarely holds true.

Scorecards Measure Performance — They Don’t Teach Skills

A monitoring form is a measurement instrument, not inherently a learning instrument.

It tells you where to play.

It does not tell you how to win.

Scorecards are excellent diagnostic tools.

They highlight which areas of a conversation deserve attention.

But they do not teach or coach the skills required to improve those areas.

Knowing that empathy was missing is not the same as knowing how to express empathy in a difficult conversation.

Seeing that “clarity of explanation” scored poorly does not show someone how to structure a better explanation the next time.

This challenge may become even more pronounced as AI-assisted scoring becomes more common.

While these tools can generate more observations and data, they cannot replace the leadership judgment and coaching conversations required to help people grow.

Learning doesn’t scale linearly with the volume of information

The more corrections we give, the less likely any one correction will be retained.

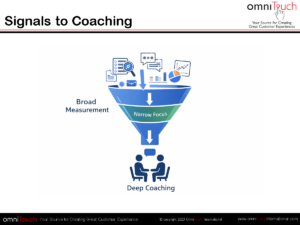

Let’s ask ourselves a practical question: how many behaviors can a frontline professional realistically improve at one time?

The real coaching skill lies in converting signals from the broad monitoring form into one or two deep coaching behaviors a frontline professional can focus on improving.

And then guide them toward mastering that skill.
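As a rough illustration of narrowing a broad scorecard down to one or two focus areas, here is a minimal sketch. Everything in it is an assumption made for the example: the 0.0 to 1.0 score scale, the 1 to 3 customer-impact rating, the 0.7 threshold, and the sample standards. It only produces a shortlist; deciding what to actually coach remains a judgment call for the coach.

```python
from dataclasses import dataclass


@dataclass
class StandardResult:
    name: str
    score: float          # 0.0 (not shown) to 1.0 (fully shown); hypothetical scale
    customer_impact: int  # 1 (low) to 3 (high); assumed to be assigned by the QA team


def shortlist_focus_areas(results, max_focus=2, threshold=0.7):
    """Return up to max_focus standards that scored below threshold.

    Higher customer impact comes first; ties are broken by the lower score.
    """
    gaps = [r for r in results if r.score < threshold]
    gaps.sort(key=lambda r: (-r.customer_impact, r.score))
    return [r.name for r in gaps[:max_focus]]


# Invented scorecard for a single conversation.
results = [
    StandardResult("Greeting", 1.0, 1),
    StandardResult("Empathy", 0.4, 3),
    StandardResult("Clarity of explanation", 0.5, 3),
    StandardResult("Avoids filler words", 0.2, 1),
    StandardResult("Closing", 0.6, 2),
]

print(shortlist_focus_areas(results))
```

Note that the lowest-scoring item here, "Avoids filler words", does not make the shortlist, because the sketch ranks by what matters to the customer before it ranks by score.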

What this means for your frontline people.

When the monitoring form is treated as the coaching tool, frontline professionals are left to interpret the feedback, select which skill gaps to work on, and close their gaps on their own.

They may understand that something went wrong, but not what specific change would improve the next conversation.

Over time, the quality program feels less like a system for developing people and more like a mechanism for evaluating them.

When a score or grade is attached, the feedback is by definition evaluative rather than developmental.

And when people feel evaluated but not coached, meaningful improvement becomes much harder to achieve.

Barrier 5 — A Lack of Performance Improvement Feedback

Much of the coaching we see in the industry revolves around discussing scores.

But when a score or grade is attached, the feedback is by definition evaluative rather than developmental.

That point is so fundamental and so important.

What about performance improvement feedback?

Performance improvement feedback can change people’s lives.

For us as leaders, performance improvement conversations are how we help our people continue what they are doing well while offering clear guidance on where and how they can improve.

And let’s remember that people cannot effectively change too many behaviors at once.

We must use our judgment to decide what to focus on, and our skill in how we talk about it.

The better we are at these conversations — and the more consistently we hold them — the better the quality outcomes.

What this means for your frontline people.

In most contact centers, the teams with the strongest and most consistent quality have a superb coach — usually the team leader — who delivers regular performance improvement feedback.

Not one who just sends their people scorecards.

Their conversations involve praise for what’s going well and constructive advice for what can be improved.

A Closing Reflection

You hear the phrase human touch all the time these days.

As long as we’re celebrating the humanity we bring to each other, perhaps it’s worth revisiting our QA programs and asking whether they truly serve the human beings in our care.

The frontline people who work for us and have the often unenviable job of dealing with guests, customers, passengers, patients, clients, and others.

Helping them improve is one of the most human things a quality program can do.

Thank You for Reading

I regularly share stories, strategies, and insights from our work in Contact Centers, Customer Service, and Customer Experience. If this resonates, I’d love to stay connected.

You can drop me a line anytime, or subscribe on our site.

Daniel Ord

www.omnitouchinternational.com

Banner photo by Laura Parashivescu on Unsplash